A lightweight, predictable and dependable kernel for multicore processors

Quest

Quest can be configured to run with various capabilities. In its basic form it supports symmetric multiprocessing (SMP), with a single system image running on all available processors or cores.

VCPU Scheduling

In Quest, virtual CPUs (VCPUs) form the fundamental abstraction for scheduling and temporal isolation in the system. The concept of a VCPU is similar to that in virtual machines, where a hypervisor provides the illusion of multiple physical CPUs* (PCPUs), represented as VCPUs, to each of the guest virtual machines. Quest VCPUs are much simpler than those in traditional hypervisors, since they do not need to cache instruction blocks to emulate sensitive instructions that could affect the behavior of separate virtual machines. Instead, Quest VCPUs exist purely to account for the time spent by threads executing on PCPUs, and to prioritize the execution of different thread groups.

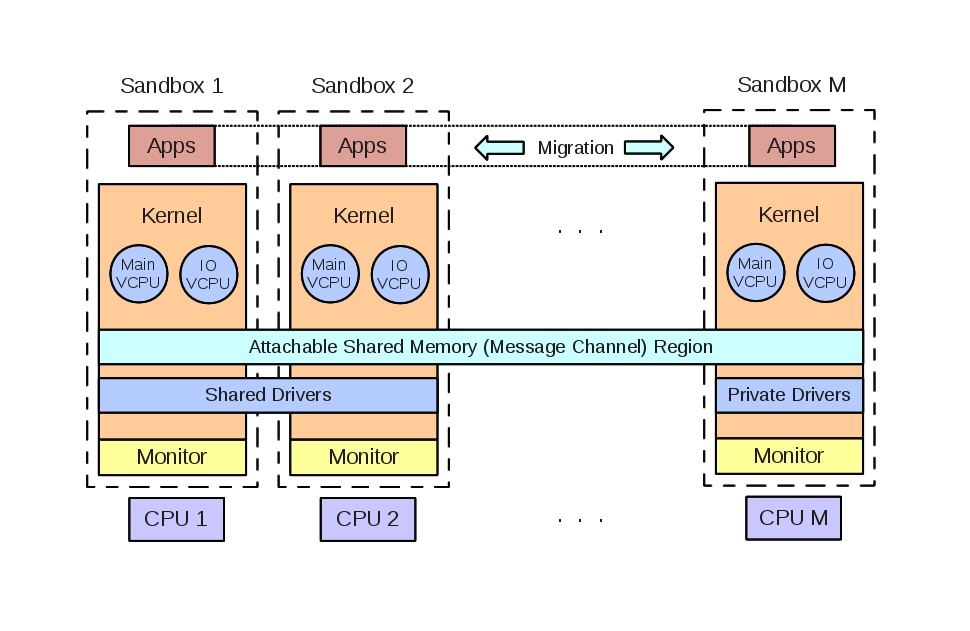

VCPUs exist as kernel abstractions to simplify the management of resource budgets for potentially many software threads. We use a hierarchical approach in which VCPUs are scheduled on PCPUs and threads are scheduled on VCPUs, as shown in the figure. Two classes of VCPUs are defined by default: (1) Main VCPUs, which are used to schedule and track the PCPU usage of conventional software threads, and (2) I/O VCPUs, which are used to account for, and schedule the execution of, interrupt handlers for I/O devices. This distinction allows interrupts from I/O devices to be scheduled as threads, whose execution may be deferred while threads associated with higher-priority VCPUs that have available budget are runnable. The flexibility of this approach allows I/O VCPUs to be specified for certain devices, or for certain tasks that issue I/O requests, thereby allowing interrupts to be handled at different priorities and with different CPU shares than conventional tasks (i.e., processes or threads) associated with Main VCPUs.
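The two-level hierarchy can be sketched in C as follows. This is a minimal illustration, not Quest's scheduler: the `struct vcpu` and `struct thread` types and the `schedule()` function are hypothetical, and the sketch assumes rate-monotonic priorities (a shorter replenishment period means a higher priority), an assumption made here purely for illustration.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical types for illustration; not Quest's actual definitions. */
enum vcpu_class { VCPU_MAIN, VCPU_IO };

struct thread {
    int id;
    bool runnable;
    struct thread *next;        /* next thread bound to the same VCPU */
};

struct vcpu {
    enum vcpu_class cls;
    uint64_t period;            /* replenishment period, in cycles */
    uint64_t budget;            /* remaining budget, in cycles */
    struct thread *threads;     /* threads bound to this VCPU */
};

/* Return the first runnable thread bound to v, or NULL if none. */
static struct thread *first_runnable(const struct vcpu *v)
{
    for (struct thread *t = v->threads; t != NULL; t = t->next)
        if (t->runnable)
            return t;
    return NULL;
}

/*
 * Level 1: among the VCPUs of one PCPU, choose the one that has budget left,
 * has a runnable thread, and has the shortest period (assumed rate-monotonic).
 * Level 2: choose a runnable thread on that VCPU.
 * Main and I/O VCPUs compete the same way here, so an interrupt-handling
 * thread on an I/O VCPU is deferred while a higher-priority VCPU can run.
 */
static struct thread *schedule(struct vcpu *vcpus, size_t n, struct vcpu **chosen)
{
    assert(vcpus != NULL || n == 0);
    struct vcpu *best = NULL;
    for (size_t i = 0; i < n; i++) {
        struct vcpu *v = &vcpus[i];
        if (v->budget == 0 || first_runnable(v) == NULL)
            continue;                       /* ineligible: no budget or idle */
        if (best == NULL || v->period < best->period)
            best = v;
    }
    if (chosen != NULL)
        *chosen = best;
    return best != NULL ? first_runnable(best) : NULL;
}
```

A VCPU with the highest priority but an exhausted budget is skipped, which is how budgets provide temporal isolation between thread groups in this sketch.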

By default, each Main VCPU acts like a Sporadic Server and is specified by a budget (in CPU cycles) and a replenishment period, which determines when that budget is made available again. Effectively, a Main VCPU looks like a PCPU operating at a reduced processing rate. By comparison, each I/O VCPU acts as a bandwidth-preserving server with a dynamically calculated replenishment period and budget. Each I/O VCPU requires only the specification of a bandwidth limit, or percentage of PCPU time it can use, when it executes on behalf of a given device. When a device interrupt requires handling by an I/O VCPU, the system determines the thread associated with the corresponding I/O request, i.e., the request that caused that thread to block on its Main VCPU and that ultimately led the device to perform an I/O operation (e.g., a read request). In Quest, all events, including those related to I/O processing, are associated with threads running on Main VCPUs. Further details about how Main and I/O VCPUs operate can be found in our technical papers.
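The budget accounting described above can be sketched as a simplified Sporadic Server plus a bandwidth rule for I/O VCPUs. Everything named here is hypothetical. For Main VCPUs, the sketch assumes the simple rule that cycles consumed during an execution interval are returned one period after that interval began. For I/O VCPUs, it assumes that the budget is the bandwidth limit U times the period of the Main VCPU being served, and that after consuming `used` cycles the I/O VCPU becomes eligible again only `used / U` later, so its long-run share never exceeds U.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define MAX_REPL 8   /* hypothetical bound on pending replenishments */

struct repl {
    uint64_t time;     /* when the amount becomes available again */
    uint64_t amount;   /* cycles to add back to the budget */
};

/* Hypothetical Main VCPU modeled as a simplified Sporadic Server. */
struct main_vcpu {
    uint64_t C;            /* budget per period, in cycles */
    uint64_t T;            /* replenishment period, in cycles */
    uint64_t budget;       /* currently available budget */
    uint64_t activation;   /* time the current execution interval began */
    struct repl q[MAX_REPL];
    size_t nrepl;          /* pending replenishments, in time order */
};

static void mv_init(struct main_vcpu *v, uint64_t C, uint64_t T)
{
    *v = (struct main_vcpu){ .C = C, .T = T, .budget = C };
}

/* The VCPU is dispatched on a PCPU at time `now`. */
static void mv_start(struct main_vcpu *v, uint64_t now)
{
    v->activation = now;
}

/* The VCPU stops (its thread blocks or the budget runs out) after `used`
 * cycles; that amount is scheduled to return one period after the
 * interval began. */
static void mv_stop(struct main_vcpu *v, uint64_t used)
{
    assert(used <= v->budget);
    v->budget -= used;
    if (used == 0)
        return;
    if (v->nrepl == MAX_REPL) {
        /* Queue full: merge into the last entry and push its time later.
         * Returning budget late is conservative: never more than C per T. */
        v->q[MAX_REPL - 1].time = v->activation + v->T;
        v->q[MAX_REPL - 1].amount += used;
        return;
    }
    v->q[v->nrepl++] = (struct repl){ v->activation + v->T, used };
}

/* Apply every replenishment that is due at time `now`. */
static void mv_replenish(struct main_vcpu *v, uint64_t now)
{
    size_t done = 0;
    while (done < v->nrepl && v->q[done].time <= now)
        v->budget += v->q[done++].amount;
    for (size_t i = done; i < v->nrepl; i++)
        v->q[i - done] = v->q[i];
    v->nrepl -= done;
}

/* Assumed I/O VCPU rules for a bandwidth limit of U_percent. */
static uint64_t io_budget(unsigned U_percent, uint64_t main_period)
{
    return main_period * U_percent / 100;
}

static uint64_t io_next_eligible(uint64_t start, uint64_t used, unsigned U_percent)
{
    assert(U_percent > 0 && U_percent <= 100);
    return start + used * 100 / U_percent;
}
```

For example, a Main VCPU with C = 20 and T = 100 that runs for 15 cycles starting at time 0 has 5 cycles left until time 100, when the 15 consumed cycles are returned.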

*Here, a PCPU can be either a conventional uniprocessor, a single core of a multicore processor, or a hardware thread.

Quest-V

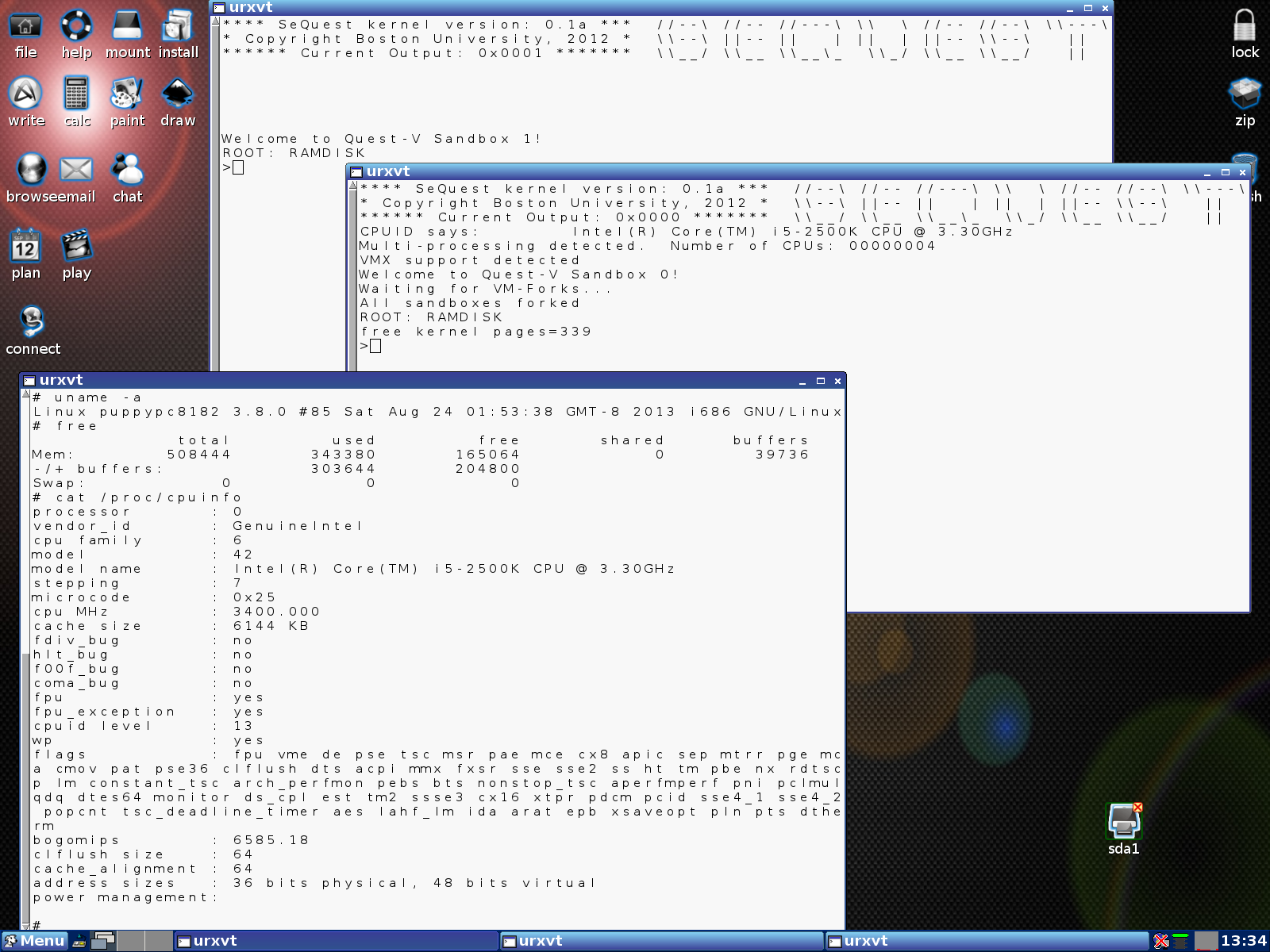

Quest can be configured to run as a virtualized multikernel, taking direct advantage of hardware virtualization features to form a collection of separate kernels operating together as a distributed system on a chip. A Quest-V multikernel is designed for high-confidence real-time systems that must continue operating in the presence of software faults. Quest-V uses virtualization techniques to isolate kernels and prevent local faults from affecting remote kernels. A virtual machine monitor for each kernel keeps track of the extended page table mappings that control immutable memory access capabilities.

In Quest-V, device interrupts are delivered directly to a kernel, rather than via a monitor that determines the destination. Apart from bootstrapping each kernel, handling faults and managing extended page tables, the monitors are mostly not needed. In special cases, they may be required to set up communication channels by manipulating extended page table mappings across sandboxes, or to assist in migrating address spaces, but otherwise each sandbox kernel operates without requiring frequent guest/monitor (VM-Exit and VM-Entry) transitions.

The Quest-V approach differs from conventional virtual machine systems, in which a central monitor, or hypervisor, is responsible for scheduling and managing host resources amongst a set of guest kernels. In Quest-V, each sandbox schedules threads onto time-budgeted virtual CPUs (VCPUs), which in turn are mapped onto physical cores. The whole approach provides space-time partitioning of machine physical resources between and within sandboxes.
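The spatial side of this partitioning can be pictured as a static table validated at boot. The sketch below is an illustration under stated assumptions, not Quest-V's configuration format: `struct sandbox_cfg` and `partition_is_valid()` are hypothetical, and the sketch assumes each sandbox owns a disjoint set of physical cores and a disjoint host-physical memory region, ignoring any shared regions that monitors might map for communication. Time partitioning within each sandbox is left to its VCPUs.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical boot-time description of one sandbox's partition. */
struct sandbox_cfg {
    const char *name;
    uint32_t core_mask;   /* bit i set => physical core i belongs to this sandbox */
    uint64_t mem_base;    /* start of its host-physical memory region */
    uint64_t mem_size;    /* region size in bytes */
};

/* Half-open ranges [a, a+alen) and [b, b+blen) intersect
 * (address overflow is not considered in this sketch). */
static bool ranges_overlap(uint64_t a, uint64_t alen, uint64_t b, uint64_t blen)
{
    return a < b + blen && b < a + alen;
}

/* True if every sandbox has at least one core and some memory, and no two
 * sandboxes share a core or any byte of host-physical memory. */
static bool partition_is_valid(const struct sandbox_cfg *s, size_t n)
{
    assert(s != NULL || n == 0);
    for (size_t i = 0; i < n; i++) {
        if (s[i].core_mask == 0 || s[i].mem_size == 0)
            return false;
        for (size_t j = i + 1; j < n; j++) {
            if (s[i].core_mask & s[j].core_mask)
                return false;
            if (ranges_overlap(s[i].mem_base, s[i].mem_size,
                               s[j].mem_base, s[j].mem_size))
                return false;
        }
    }
    return true;
}
```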

An Overview of the Quest-V Multikernel

Virtualization Features

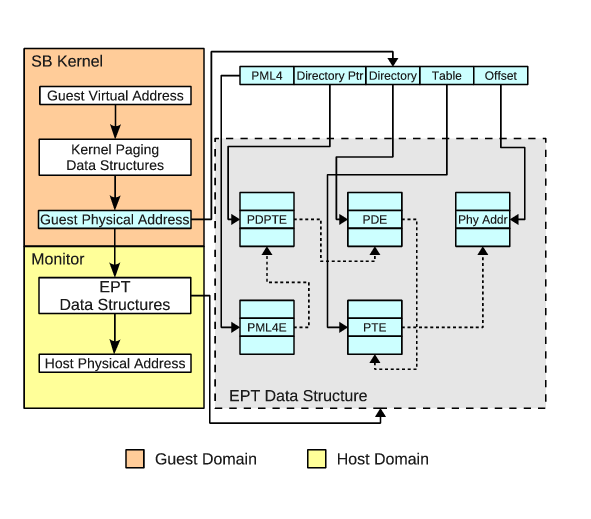

Quest-V currently uses the extended page tables (EPTs) found on Intel VT-x processors, although similar Nested Page Table (NPT) capabilities on AMD-V processors should be easy to support. EPTs are used to isolate the memory regions of sandboxes at 4KB granularity. For each 4KB page, we can set read, write and even execute permissions. Consequently, attempts by one sandbox to access illegitimate memory regions of another will incur an EPT violation, causing a trap to the local monitor. The EPT data structures are themselves accessible only to the monitors, thereby preventing tampering by sandbox kernels.
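To make the per-page permissions concrete, here is a small C model of a 4KB EPT leaf entry. The bit positions (read, write and execute in bits 0 to 2, the page frame from bit 12 upward) follow Intel's documented EPT format, assuming 52-bit physical addresses. The helper names are hypothetical, and on real hardware the permission check is performed by the processor during address translation, not by software.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

/* Permission bits of an Intel EPT entry (bits 0-2). */
#define EPT_READ       (1ULL << 0)
#define EPT_WRITE      (1ULL << 1)
#define EPT_EXEC       (1ULL << 2)
#define EPT_PERMS      (EPT_READ | EPT_WRITE | EPT_EXEC)
#define EPT_ADDR_MASK  0x000FFFFFFFFFF000ULL   /* bits 51:12: 4KB frame */

/* Build a 4KB leaf entry mapping a guest-physical page to host_phys.
 * Memory-type and other attribute bits are left at zero for brevity. */
static uint64_t ept_make_leaf(uint64_t host_phys, uint64_t perms)
{
    assert((host_phys & 0xFFFULL) == 0);            /* 4KB aligned */
    /* Write-without-read is an EPT misconfiguration on Intel hardware. */
    assert(!((perms & EPT_WRITE) && !(perms & EPT_READ)));
    return (host_phys & EPT_ADDR_MASK) | (perms & EPT_PERMS);
}

/* Software model of the hardware check; `access` combines EPT_READ,
 * EPT_WRITE and EPT_EXEC. false models an EPT violation, i.e. a VM exit
 * that traps to the local monitor. */
static bool ept_permits(uint64_t entry, uint64_t access)
{
    uint64_t need = access & EPT_PERMS;
    return (entry & need) == need;
}

/* Host-physical address for a guest-physical address within the page. */
static uint64_t ept_host_addr(uint64_t entry, uint64_t gpa)
{
    return (entry & EPT_ADDR_MASK) | (gpa & 0xFFFULL);
}
```

A page shared read-only with another sandbox would be mapped with `EPT_READ` alone, so any write to it from that sandbox faults into its monitor.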

EPT Mappings in Quest-V

EPT support alone is actually insufficient to prevent faulty device drivers from corrupting the system. It is still possible for a malicious driver or a faulty device to DMA into arbitrary physical memory. This can be prevented with technologies such as Intel's VT-d, which use IOMMUs to restrict the regions into which DMAs can occur. However, this is still insufficient to address other, more insidious security vulnerabilities such as "white rabbit" attacks [1].

For example, a PCIe device can be configured to generate a Message Signaled Interrupt (MSI) with an arbitrary vector and delivery mode by writing to local APIC memory. Such malicious attacks can be addressed using hardware techniques such as Interrupt Remapping (IR). Having said this, the focus of our work is predominantly on fault isolation and safety in trusted application domains, rather than security in untrusted systems.
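The MSI example can be illustrated by the message format itself. Following the xAPIC MSI layout described in Intel's Software Developer's Manual, a device signals an interrupt by writing a 32-bit data word to an address in the 0xFEExxxxx range; the vector and delivery mode are simply fields of that word, so without interrupt remapping nothing stops a misbehaving device from requesting, say, an INIT or SMI delivery. The helper names below are hypothetical, and the sketch omits the redirection-hint, destination-mode and level/trigger bits.

```c
#include <assert.h>
#include <stdint.h>

/* Delivery-mode encodings (data word bits 10:8). */
enum msi_delivery {
    MSI_DM_FIXED  = 0x0,
    MSI_DM_LOWEST = 0x1,
    MSI_DM_SMI    = 0x2,
    MSI_DM_NMI    = 0x4,
    MSI_DM_INIT   = 0x5,
    MSI_DM_EXTINT = 0x7,
};

/* Address word: fixed 0xFEE prefix, destination APIC ID in bits 19:12. */
static uint32_t msi_address(uint8_t dest_apic_id)
{
    return 0xFEE00000u | ((uint32_t)dest_apic_id << 12);
}

/* Data word: vector in bits 7:0, delivery mode in bits 10:8
 * (edge-triggered, since bit 15 is left clear). */
static uint32_t msi_data(uint8_t vector, enum msi_delivery mode)
{
    assert((unsigned)mode <= 0x7u);
    return (uint32_t)vector | (((uint32_t)mode & 0x7u) << 8);
}
```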

[1] R. Wojtczuk and J. Rutkowska, "Following the White Rabbit: Software Attacks against Intel VT-d Technology," Invisible Things Lab, April 2011.

Quest-Linux, Separation Kernels and MILS

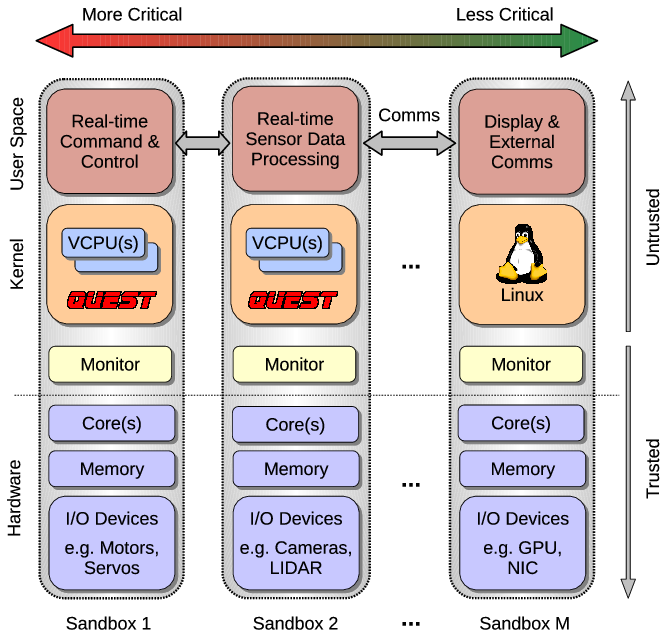

The Quest-V multikernel replicates kernel images across sandboxes. It is possible to build a Quest multikernel without hardware virtualization, but this has implications for the extent to which one kernel is isolated from another. Conventional page-based virtual memory or segmentation offered by processors with MMUs (memory management units) or MPUs (memory protection units) can be used to protect the memory regions of different kernel instances. However, without hardware virtualization capabilities it is more challenging to restrict the access of separate kernels to specific I/O devices and machine processors or cores. That said, a Quest system can be implemented as a separation kernel (in the spirit of John Rushby's definition [2]) by isolating separate services in different sandboxes that are connected via well-defined communication channels. If these channels are severed, there should ideally be no remaining implicit, or covert, channels for communication. Using hardware virtualization, a Quest separation kernel can be established with a series of real-time sandboxes connected via secure communication channels to a Linux front-end.

We currently support the configuration of a Quest-Linux separation kernel, allowing real-time services and device management in Quest sandboxes, along with explicit communication to a Linux front-end in a separate sandbox. This allows native Linux applications to co-exist on a system with real-time services, with temporal and spatial isolation between Linux and Quest functionality. In this manner, we are able to support multiple independent levels of security (MILS).

While other systems have been developed as separation kernels (e.g., PikeOS from SYSGO), they invariably rely on hypervisors to schedule the execution of guest virtual machines, or sandboxes. In our approach, monitors are used to bootstrap sandboxes, establish communication channels between them, and aid in the recovery of failed sandboxes. With Quest, it is actually possible to establish communication channels statically at boot time and thereby remove system monitors altogether (assuming fault recovery is not needed), realising a separation kernel in its purest form on a multi-/many-core processor. Virtualization becomes integral to the isolation of kernel services without requiring a trusted hypervisor to manage resources at runtime.

[2] John Rushby, "The Design and Verification of Secure Systems," Eighth ACM Symposium on Operating System Principles, pp. 12-21, Asilomar, CA, December 1981. (ACM Operating Systems Review, Vol. 15, No. 5).